The Wizard versus Muggles

There was a lot of pre-release speculation and rumor about the iPhone 6s camera. As usual, some of it turned out to be true, while the rest was just wishful thinking, or perhaps ideas for features of a future Apple smartphone generation. As a photographer, I decided to see for myself what its camera was really like, and for the first time I bought the newest iPhone, the iPhone 6s, on its launch day.

I always tell people that the camera is one of the most important elements of the iPhone for me. I’ve been using an iPhone 6 for a year now and was curious whether the newer camera could be a reason to upgrade to the latest iPhone generation. Or perhaps it’s a better time for current iPhone 5s owners to change to the new device? To answer these questions, I took dozens of photos with the iPhone 6s, 6 and 5s cameras. The last two models — the 6 and 5s — are the iPhone generations with owners who are going to be the most interested in upgrading. All of the smartphones tested had iOS 9.0.1 installed. I divided the test into several parts, each of which shows the capabilities of each camera in different aspects:

- operation under different light conditions

- bokeh (background blur)

- noise

- sharpness

- color representation

- panorama

- flash

- selfie

Before we dive into the pictures and comparisons, let’s try to summarize the changes introduced in the iOS camera app interface on the iPhone 6s running the latest version of iOS 9. Initially it appears that not much has changed, but the trained eye will immediately notice a new element in the middle of the upper bar, used to activate the Live Photo mode. When I first saw the Live Photo effect during the September Apple presentation, I thought the world of Wizards had finally collided with the universe of the Muggles. Live Photos literally animate when you press the 3D Touch display of the iPhone 6s, so our photos can capture not only the moment, but also the accompanying scene movement. We can use a Live Photo as a wallpaper on the lock screen and watch the image "move" as we press the display. These "magical" pictures can be captured with both front and rear cameras. Technically speaking, a Live Photo consists of the basic photo plus a short frame sequence with sound, covering 1.5 seconds before and 1.5 seconds after the moment the photo is taken. Every photo taken in this format takes twice as much storage space as a regular static picture. There’s also an option to convert a Live Photo into a regular image in Edit mode. For the time being, Live Photos can only be created on the iPhone 6s and 6s Plus, but they can be viewed on all devices running iOS 9 or OS X El Capitan. According to what Apple announced during the September presentation, Instagram and Facebook will soon support previews of Live Photos.

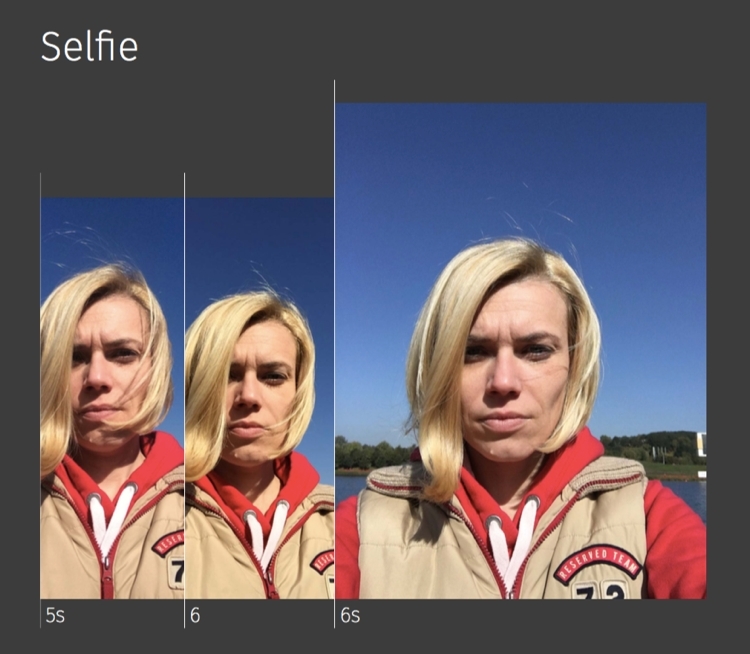

Another novelty is the Retina Flash used by the iPhone 6s FaceTime camera, which allows us all to take even nicer selfies. This seemingly strange solution, adopted from SLR camera flashes, uses pre-flash technology, in which the camera takes a test shot to determine the current ambient light and shooting conditions. As a result, the flash doesn’t always fire with the same light, but adjusts it to current conditions in terms of both light intensity and color temperature. Retina Flash uses the display as the light source, flashing it at three times the maximum intensity available through the regular screen brightness control. The resulting selfie is free of unattractive shadows and in general makes a much better impression than self-portraits made with overhead (ceiling) or ambient (sunlight) lighting. A pleasant side effect of having a relatively large flash surface (over 60 cm² / 9.3 sq. in. for the 6s and 82 cm² / 12.7 sq. in. for the 6s Plus) is skin smoothing and reduced reflections on the nose, forehead and cheeks.

The iOS 9 Camera app still doesn’t offer the possibility of shooting in the 16:9 widescreen format, but third-party apps like Camera+ can be used instead. As for Burst Mode shooting, already well known from previous iPhone generations, the shooting rate remains unchanged at 10 frames per second, although it is hard not to get the impression that the hardware capabilities of the iPhone 6s go far beyond that limit.

The "Focus Pixels" technology introduced in the iPhone 6/6 Plus has never been fully explained by Apple, but we can assume with a high level of confidence that it is similar to the phase detection autofocus (AF) system used successfully for the last several years in DSLR cameras. Without going deep into technical details, it’s worth mentioning that the contrast detection autofocus system used previously (up to the iPhone 5s) is much slower and does not work reliably in poor lighting conditions or when pointed at uniform surfaces. The tests show it clearly: the 5s AF very often requires the camera to go through all available focus settings only to get back to the best possible position, so that the whole system is slow, capricious and gives the impression of being made of "rubber". To make things even worse, another focus attempt is often made while you’re trying to take a photo, which in practice means the loss of many opportunities to take a good, sharp picture. With the iPhone 6 phase detection-based AF, improvements in operation are noticeable right from the first picture: focusing is predictable, fast and accurate. With the iPhone 6s, the speed and precision of focus are even more impressive. It seems to work instantly and accurately, even in adverse conditions.

In preparing this year's iPhone generation, Apple also solved the problem of color areas "flooding" into adjacent parts of the image. This problem has afflicted many iPhone photographers for years. In practice, it means that elements of one color pass into another part of the image, blurring sharp boundaries between them. In order to address the phenomenon, the sensors of the latest models use so-called “deep trench isolation” technology, which puts individual pixels in optical isolators preventing the aforementioned blurring.

The iPhone 6s is also the first Apple product capable of recording video in 4K resolution (3840 x 2160 at 30 frames / sec). To imagine the level of detail of 4K video, think of this: in order to match the resolution of a 4K TV set with Full HD displays, we would need four of the latter arranged in two columns and two rows. In other words, every Full HD pixel corresponds to four 4K pixels. This also means that a minute of 4K video takes up much more space than Full HD: 375 MB according to Apple’s tech specs. If you’re going to record more than 30 minutes of 4K video (over 11 GB) on your iPhone 6s, you should definitely think about getting more storage than the base 16GB.
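The arithmetic behind these figures is easy to check. The short Python sketch below is only an illustration: the function names are made up for this example, the Full HD resolution of 1920 x 1080 is the standard figure rather than something quoted above, and the storage estimate assumes 1 GB = 1000 MB and a constant 375 MB per minute.

```python
# Resolutions in pixels (width, height).
UHD_4K = (3840, 2160)   # 4K video resolution quoted in the article
FULL_HD = (1920, 1080)  # standard Full HD resolution (assumed here)

# Per-minute size of 4K video quoted in the article from Apple's tech specs.
MB_PER_MINUTE_4K = 375


def pixel_count(resolution):
    """Total number of pixels in a frame of the given resolution."""
    width, height = resolution
    return width * height


def full_hd_panels_needed(target=UHD_4K, panel=FULL_HD):
    """Columns, rows and total Full HD panels needed to tile a 4K frame."""
    columns = target[0] // panel[0]
    rows = target[1] // panel[1]
    return columns, rows, columns * rows


def storage_gb(minutes, mb_per_minute=MB_PER_MINUTE_4K):
    """Rough storage estimate in GB, assuming 1 GB = 1000 MB."""
    return minutes * mb_per_minute / 1000


print(pixel_count(UHD_4K) / pixel_count(FULL_HD))  # 4.0
print(full_hd_panels_needed())                     # (2, 2, 4)
print(storage_gb(30))                              # 11.25
```

Half an hour of 4K footage therefore comes to roughly 11 GB, which leaves very little room on a 16GB device once iOS and apps are taken into account.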

An example of 4K video recorded on the iPhone 6s can be found here.

Many of the following tests based on image sharpness or the ability of the camera to register as many details as possible naturally favor the iPhone 6s due to the fact it has the largest sensor pixel count to date. That's why I tried to concentrate on other image parameters, under the assumption that the iPhone 6s will always have the best image sharpness.

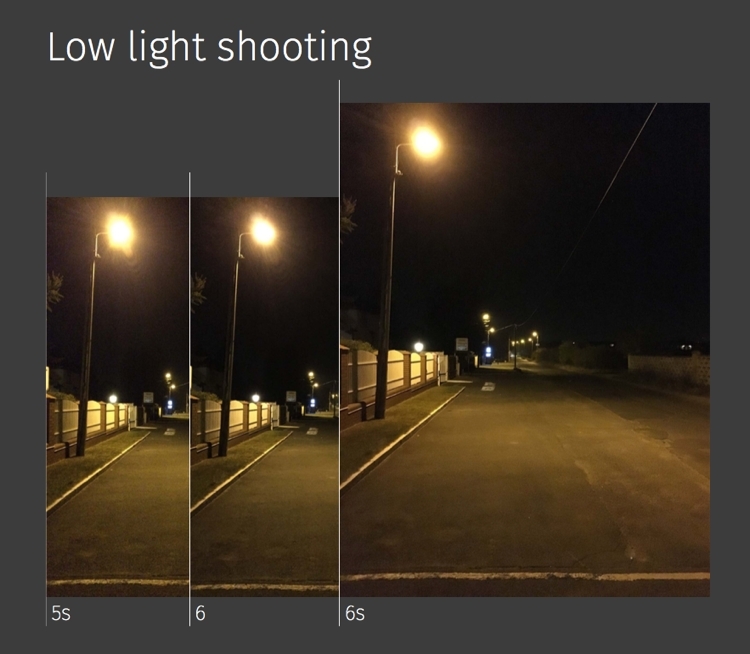

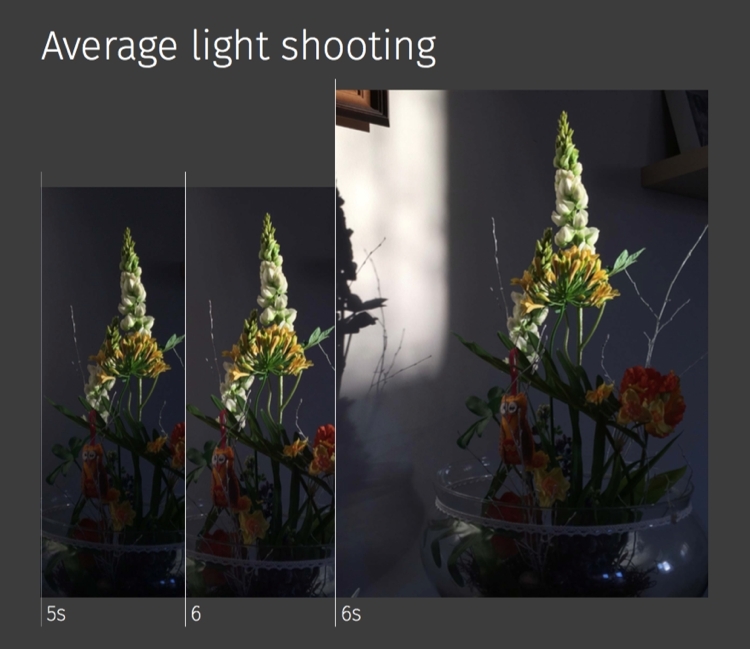

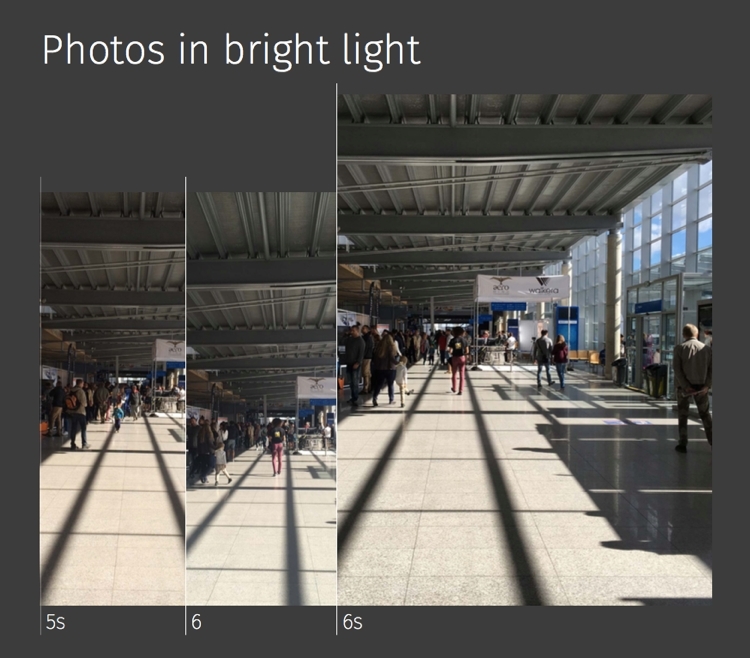

Despite the manual camera mode available since iOS 8, most iPhone photos are still taken in automatic mode. That’s why I decided to test the new iPhone camera automatic mode under three lighting conditions - weak, average and strong light. So, without further ado, it’s now time for the photos and the first comparison.

As expected, photos taken with the iPhone 6s contain the most detail, although darker areas were captured best by the iPhone 6 camera. There is always some noise in low-light shots, regardless of the quality of the sensor used, but a photo looks best when that noise is uniform, fine and devoid of color artifacts. That’s the case with the photo taken with the iPhone 6s, whose scaled-down image also appears smoother and less affected by JPEG compression artifacts.

Composing a scene with one well-lit object and the rest of it in shadow revealed visible differences in the exposure algorithms of each tested smartphone. The iPhone 5s photo shows how little, when compared to 6 and 6s, information was recorded by the camera. On the other hand, the 5s shadow color is the most authentic, but the amount of noise in the shadow remains much higher than for the other two cameras. What’s important is that none of the iPhone cameras have over- or underexposed the image, despite the tricky scene lighting.

The 5s camera has warmed the scene a bit more than the other two models, but this is a well-known characteristic of "5" series devices. The colors of the shadowed areas were reproduced better by the iPhone 5s and 6s this time, while the iPhone 6 washed out the blacks a bit. What’s also interesting here is that in the iPhone 6s shot there are visible objects reflected in the floor: people, windows in the door. This might indicate some changes in the camera lens used in the latest iPhone generation (decreasing the polarization index, for example).

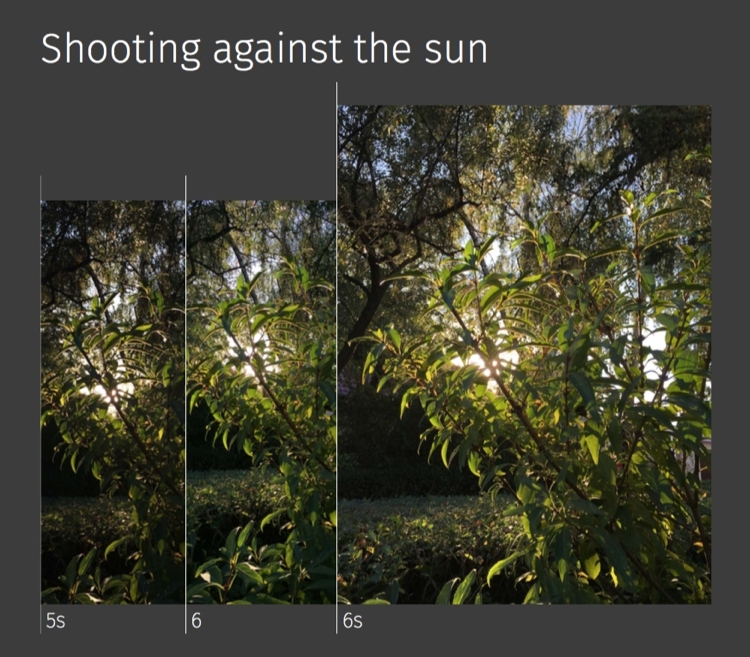

This kind of shot is always demanding for both the camera and the software managing it. This time, all three iPhones did very well, although we can identify aspects that differentiate them. The iPhone 6s is the only camera that has not introduced any visible chromatic aberrations (the delicate purple tint near the very light and dark border areas) which can be seen on the other two photos, especially the one shot with iPhone 6. It was also able to reproduce all the details of chiaroscuro, providing clear information about the details in the darker parts of the picture. The 5s generation, in turn, faithfully recreated the subtle shades accompanying the setting sun. The two other cameras provided a somewhat bolder approach in terms of lighting up the shadows and illuminating shaded bush leaves. Maybe it looks more attractive, but it’s not quite how it looked to the eye when the picture was being taken. The iPhone 6 faithfully showed the colors of illuminated leaves, presenting the appropriate variety of greens.

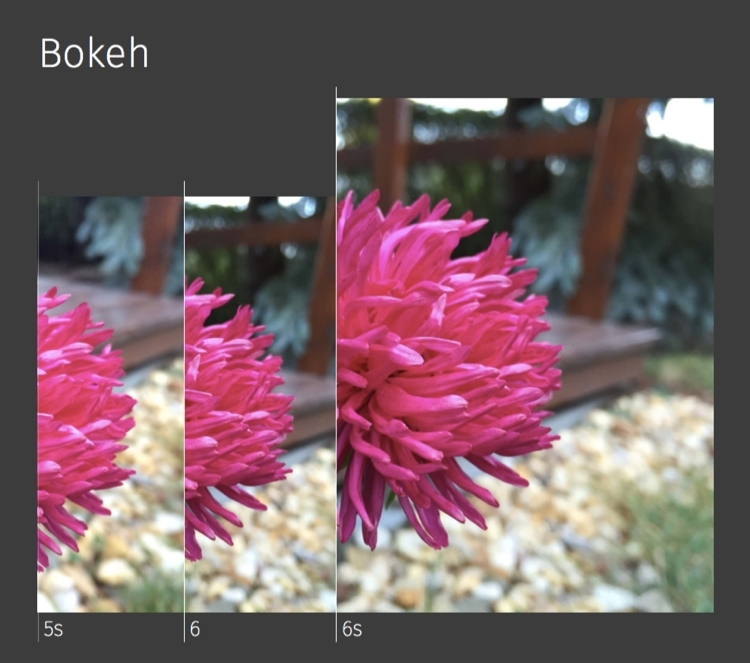

This artifact of so-called depth of field, which blurs the part of the scene outside of our interest, is influenced by many factors but the most important are the aperture size of the lens used and the distance from the subject. All three tested devices have the same fixed aperture - f/2.2. There are probably some minimal differences in their lenses. Test shots were made on the same subject at the same distance, so the background in them should be equally blurred, too. The tests show that what is common to all of the tested devices is only their ability to blur the background — the quality of the bokeh is an individual camera feature. The 5s camera as usual adds noise to the background and washes out colors so that they appear as they would through a dusty window pane. The iPhone 6 can do better than this, but it has a slight tendency to mix the neighboring spots, giving them a shapeless form. The iPhone 6s camera did the best with this part of the test, reproducing nearly SLR-grade bokeh.

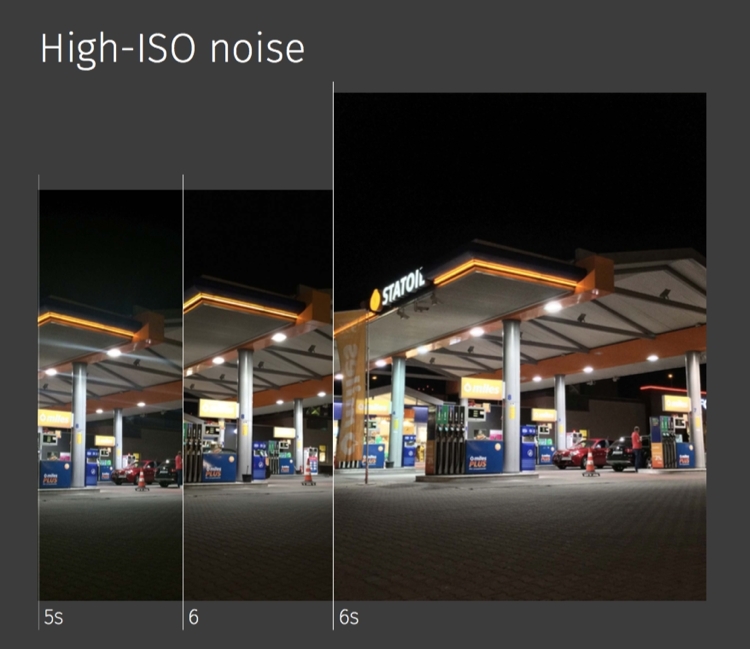

In this category, the iPhone 5s camera noise in low lighting conditions once again takes its toll. This phenomenon has unfortunately also affected the color reproduction, level of detail, and the number of unwanted artifacts (as the JPG compression algorithm tries to "save" the noise, it introduces pseudo-random artifacts in the output file). The amount of detail in underexposed areas proved to be best with the iPhone 6s camera, achieved somewhat at the expense of slight grain, which fortunately is of much higher "quality" on this model and does not seriously degrade the picture. The iPhone 6 appeared to be the best at reproducing the blacks, but had some problems with high-contrast areas (illuminated signs are overexposed and unreadable).

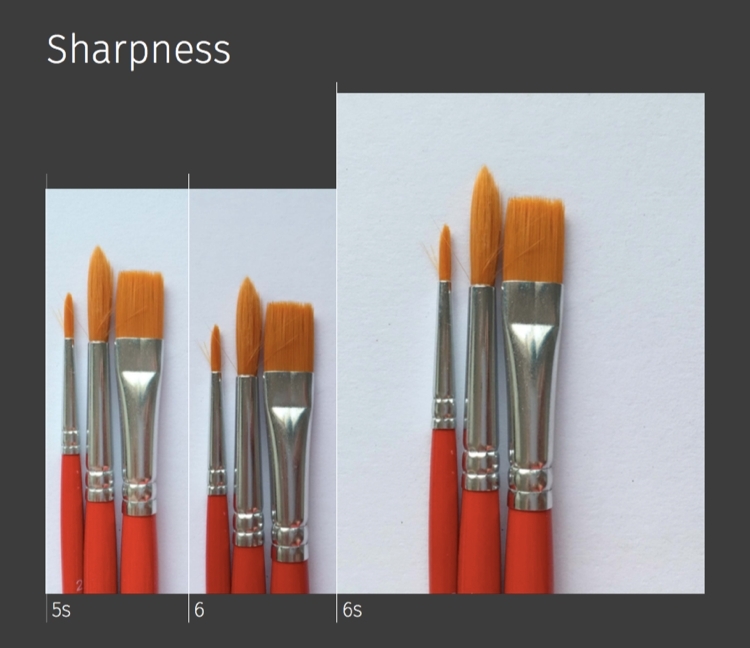

Image sharpness is the camera's ability to maintain fine details of objects such as hair or fur, which can be lost through excessive noise reduction or sharpening. The iPhone 5s and 6 cameras, although using sensors with the same pixel resolution, do not show the same level of detail. The iPhone 5s image makes it difficult to distinguish individual brush hairs, as if shadow and detail data have been lost in the process of saving the picture file. The iPhone 6 coped with this task almost as well as the iPhone 6s did, perhaps because its sensor has fewer but larger pixels (8MP / 1.5µm in the iPhone 6 and 12MP / 1.22µm in the iPhone 6s).
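To put the pixel figures in perspective, here is a rough Python sketch comparing the light-gathering area of a single pixel on each sensor. It is a simplification under stated assumptions: pixels are treated as perfect squares with the quoted pitch, gaps between pixels and microlens design are ignored, and the function name is invented for this example.

```python
# Pixel pitch (micrometres) and pixel count (megapixels) quoted in the article.
IPHONE_6 = {"pitch_um": 1.5, "megapixels": 8}
IPHONE_6S = {"pitch_um": 1.22, "megapixels": 12}


def pixel_area_um2(pitch_um):
    """Area of one pixel in square micrometres, assuming a square pixel."""
    return pitch_um ** 2


area_6 = pixel_area_um2(IPHONE_6["pitch_um"])    # 2.25 square micrometres
area_6s = pixel_area_um2(IPHONE_6S["pitch_um"])  # about 1.49 square micrometres

# How much more area a single iPhone 6 pixel has than a single iPhone 6s pixel.
ratio = area_6 / area_6s
print(round(ratio, 2))  # 1.51

# Approximate total light-sensitive area in square millimetres:
# megapixels * area per pixel (1e6 pixels * 1e-6 mm^2 per um^2 cancel out).
total_6 = IPHONE_6["megapixels"] * area_6
total_6s = IPHONE_6S["megapixels"] * area_6s
print(round(total_6, 2), round(total_6s, 2))  # 18.0 17.86
```

Under these assumptions, each iPhone 6 pixel covers about 1.5 times the area of an iPhone 6s pixel, while the total light-sensitive area of the two sensors comes out nearly identical. That would be consistent with the iPhone 6 holding its own in this test despite the lower pixel count.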

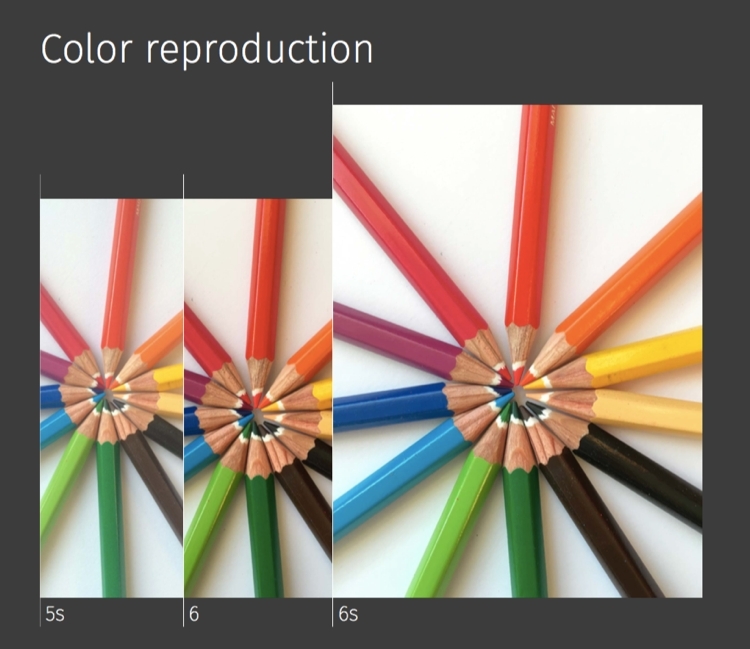

If any iPhone 5s owners still had doubts about whether upgrading to an iPhone 6 or 6s is worthwhile, this image should dispel them. The differences between the 5s and the other two models are just plain devastating to the 5s model, from the color quality to focus, the wood texture fidelity, and the contrast of the entire scene. Interestingly, the last two generations of iPhone cameras have achieved quite similar results (of course, if we ignore the differences between their sensors), making the iPhone 6 still an attractive alternative to the iPhone 6s in terms of its photographic capabilities.

With each step along the development of the iPhone camera, Apple has tried to solve different problems related to automatic creation of panoramic images. The most important factors here are to ensure consistent brightness, contrast and saturation for the output file and to make the stitching areas as invisible as possible. While the first challenge was overcome by Apple a long time ago, the image stitching is still an issue as shown in the photos taken for this part of the test. The “joints” seen on the pictures show different approaches to trying to resolve this problem, with stitches and artifacts resulting from the joining process visible for all three generations of Apple smartphone. Of course, a more serious approach to taking a panoramic picture would require using a tripod with a rotating head which probably would improve the output result of the joining algorithm and the overall final effect. Let’s be clear about it, though: not many people with iPhones in their pockets carry such equipment, and instead use themselves as a “tripod” most of the time. That’s why I decided to handhold the iPhones to perform this part of the test.

Due to the larger pixel count, the iPhone 6s saves much larger panoramic image files than its predecessors (63 MP for the iPhone 6s vs. 43 MP for iPhone 6 and 28 MP for iPhone 5s), which means not every application can handle an image of this size.

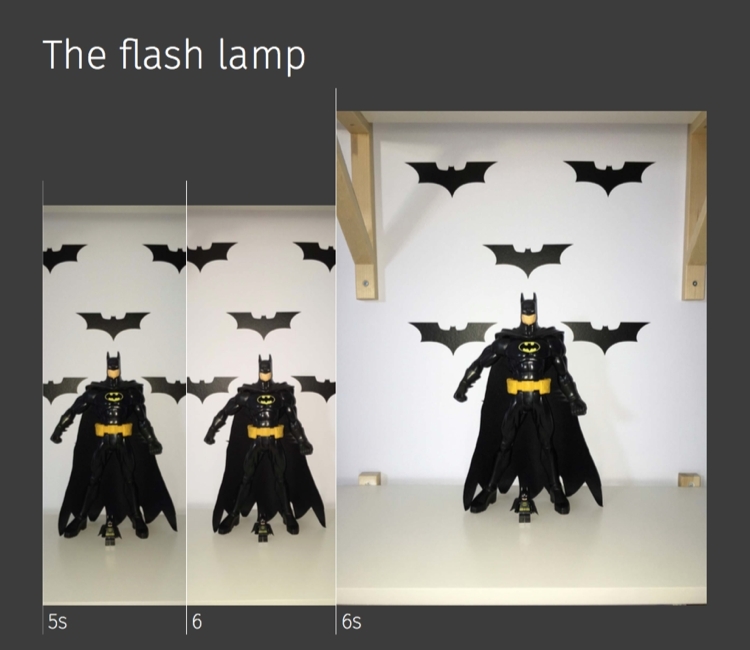

The "True Tone" flash introduced in the iPhone 5s consists of two LEDs: cool white and amber. During the so-called pre-flash, the color temperature of the scene is determined and set by choosing the color proportions of both flash LEDs. Apple says the flash can fire with over 1,000 different color temperatures, which should be sufficient for the vast majority of everyday situations and types of lighting. This does not mean that the two elements give twice as much light, but only that the temperature of the flash is chosen so that it lights up the scene as naturally as possible.

During testing, I found that the iPhone 6 and 6s flashes illuminate the scene a little better than the original 5s version, which may mean that some changes and improvements have been made along the way. It is not known whether that’s the result of greater power and improvements made within the flash or due to increased sensor sensitivity in the newer iPhone models. It also appears that the flash algorithm for choosing the color temperature has been revised in the latest generation of iPhone, because the picture made with this model appears to have the best representation of the actual colors of the scene (both the iPhone 5s and 6 made the image slightly warmer than in reality). Blacks are represented the worst in the photo taken with the iPhone 6 (appearing more like dark grays), while the iPhone 5s and 6s did much better here, with the former surprisingly performing the best.

As mentioned earlier, Apple introduced a front flash solution called Retina Flash in the iPhone 6s. The explicit target of the feature is people taking a lot of self-portraits, commonly called "selfies". Looking at my test selfies taken with previous models at 100-percent scale I was about to shout "God, how ugly I am!". But then what I saw in the selfie taken with the 6s made me almost burst into tears: thanks to the front sensor being bumped up from 1.2MP to 5MP, I could suddenly see all the imperfections of my skin and face. I warn you: do not buy the iPhone 6s if you do not want to find out the same about yourself ;). Fortunately, the Retina Flash brightened up my face, magically smoothing the skin and causing the next 6s selfie to be cool again :).

What is noticeable after the release of each new iPhone generation is how much Apple cares about the continuous development of its smartphone camera. The fact that the camera is always Apple’s “icing on the cake” is widely discussed and commented upon. Some new features are applauded, while some are booed. Nevertheless, looking at the results of the above tests, let’s be honest: if we leave out the higher-resolution sensors, Live Photos, Retina Flash and the possibility of recording 4K material, the everyday photography differences between the iPhone 6 and 6s are rather… symbolic. The real revolution awaits the owners of the iPhone 5s and older models, who will benefit from improvements ranging from faster and more accurate autofocus to minimal noise and beautifully rendered vivid colors. If you use the iPhone 6 and you don’t feel a sudden desire to immerse yourself in the magic world of Live Photos wizardry, you can safely wait with the rest of the Muggles for the next Apple smartphone generation, just as I’ll do. Maybe the next iPhone will be even more magical?

The archive of all photos taken during this test can be found here:

They come as unedited and raw original files as saved by the tested cameras.

This article was previously published in MyApple Magazine No. 2/2015